Editorial trust

How this article is handled

Prompt Insight articles may use AI-assisted research support, outlining, or drafting help, but readers should still verify time-sensitive details such as pricing, limits, and vendor policies on official product pages.

Review snapshot

What we checked for this guide

This article was written by checking current official guidance and company updates around implanted and assistive brain-computer interfaces, then building a realistic 2030 outlook around medical, accessibility, and computing use cases.

- We treated BCIs as an emerging field with real human trials and real medical potential, not as a solved consumer gadget category.

- The article distinguishes between invasive, non-invasive, and semi-invasive systems because they have very different tradeoffs.

- We kept the 2030 forecast grounded in present-day regulation, clinical testing, and accessibility use cases rather than fantasy-level mind reading claims.

Why it helps

Strong points readers should notice

- The guide explains a complex subject in simple language without flattening the science.

- Readers get a balanced picture of both transformative use cases and serious ethical concerns.

- It is strong for future-tech search intent because it answers practical questions while still feeling current.

Watchouts

Limits worth knowing up front

- The most ambitious BCI promises still depend on clinical, regulatory, and cost barriers being solved.

- Consumer adoption outside healthcare may remain slower than viral headlines suggest.


It used to sound impossible.

Control a screen with thought. Move a cursor without touching a mouse. Send a message without speaking. Use brain activity to guide assistive technology.

For a long time, brain-computer interfaces lived in the same category as the most ambitious sci-fi concepts: fascinating, promising, and always just a little too far away to feel real.

That is no longer true.

Brain-computer interfaces, or BCIs, are still early, still complex, and still surrounded by very real ethical and technical questions. But they are not imaginary anymore. They are becoming one of the clearest examples of how AI, neuroscience, sensors, and computing are beginning to merge in practical human-facing systems.

By 2030, BCIs may not be a normal everyday consumer gadget for everyone. But they could become dramatically more visible in medicine, accessibility, rehabilitation, assistive communication, and eventually certain specialized work or computing environments.

That is why this topic matters now.

The future of BCIs is not just about impressive demos. It is about changing the boundary between the human nervous system and the digital world.

If you want the broader AI-companion future after this, read The Rise of AI-Powered Personal Assistants in 2030.

What is a brain-computer interface in simple terms?

A brain-computer interface is a system that translates brain activity into commands that software or hardware can understand.

That sounds technical, but the basic idea is surprisingly clear.

Your brain is constantly producing electrical activity. BCIs try to detect meaningful patterns in those signals and turn them into usable outputs.

That output might be:

- moving a cursor

- selecting letters on a screen

- controlling a robotic limb

- triggering assistive communication

- interacting with software without a keyboard or mouse

The reason BCIs are so powerful is that they can create a direct bridge between intention and action.

For people with paralysis or severe motor impairment, that bridge can be life-changing.

For the broader future of computing, it could eventually reshape how humans interact with machines altogether.

Are BCIs already real, or is this still experimental?

Both statements are true at the same time.

BCIs are absolutely real. They are being researched, tested, and used in serious medical and assistive contexts right now.

At the same time, the category is still experimental in many areas. That is important, because internet headlines often blur the gap between:

- proof of concept

- early clinical trial

- controlled assistive use

- mainstream consumer readiness

Those are not the same thing.

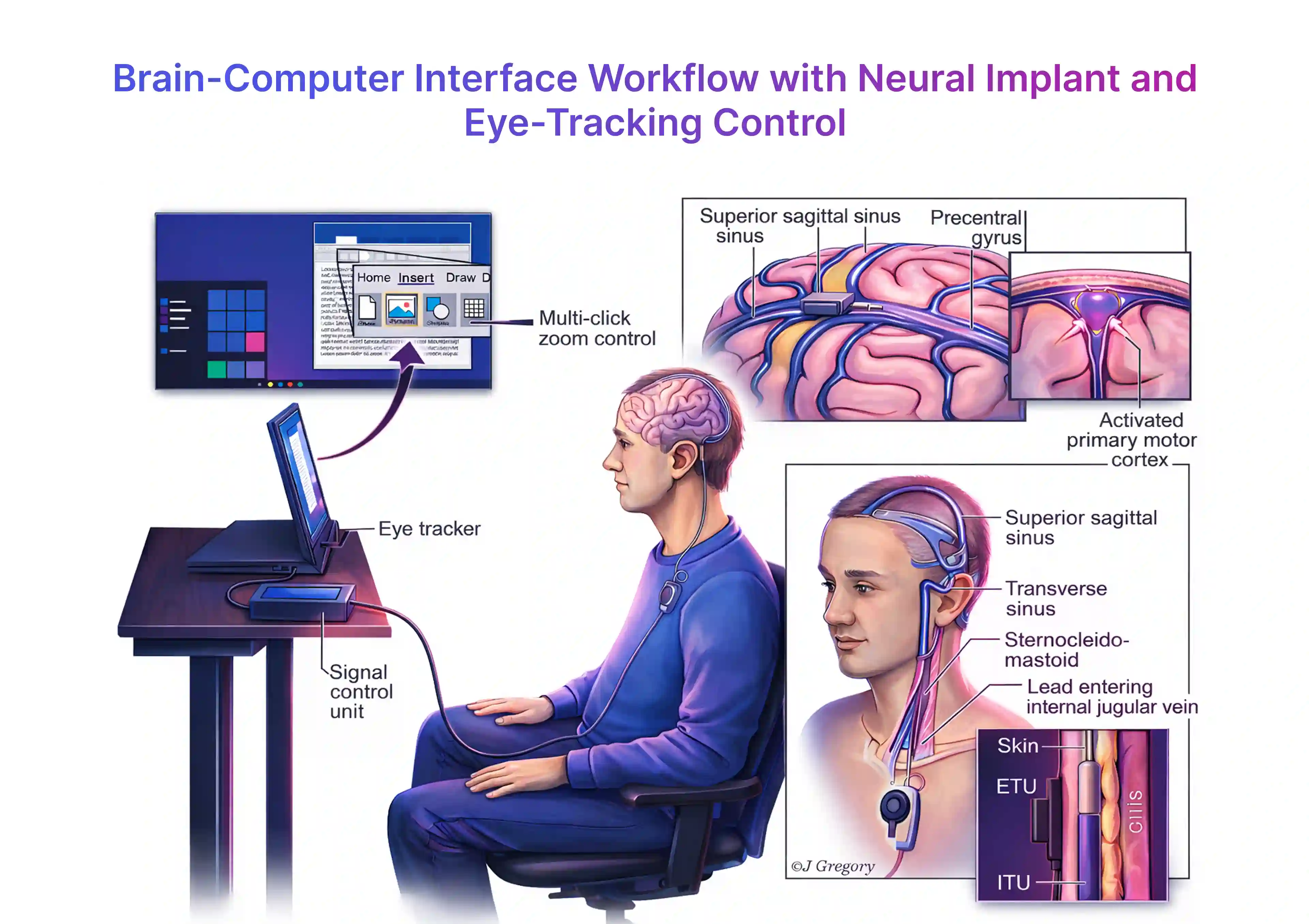

The FDA's public workshop on implanted brain-computer interface devices makes it clear that regulators already treat this as an active medical-device field, not a speculative curiosity. Neuralink's PRIME study, Synchron's endovascular BCI approach, and Precision Neuroscience's work all show that several different designs for human neural interfaces are being explored right now.

That is why 2030 matters. The next few years will shape whether BCIs remain mostly specialized tools or start becoming a larger computing category.

What are the main types of BCIs?

One of the biggest mistakes people make is treating all BCIs as one thing.

They are not.

1. Invasive BCIs

These are implanted directly into or very near brain tissue.

They typically offer:

- better signal quality

- more precise control

- deeper long-term potential

But they also raise the biggest concerns around surgery, safety, cost, regulation, and long-term maintenance.

2. Non-invasive BCIs

These use external hardware, such as EEG-style headsets, to read brain signals without surgery.

They are generally:

- safer

- easier to deploy

- more accessible for experimentation

But they usually offer lower signal quality and less precision than implanted systems.

3. Semi-invasive or alternative-interface BCIs

These try to balance performance and safety.

Some approaches avoid direct penetration into brain tissue while still seeking stronger signal access than classic external headsets can provide.

That middle space is especially interesting because it may offer a more scalable path for certain medical or assistive uses.

How do BCIs actually work?

Every BCI system is different, but most follow the same basic pipeline.

Step 1: Capture brain signals

The first job is measuring neural activity.

That can happen through:

- external electrodes

- implanted arrays

- vascular or minimally invasive systems

- other sensor designs depending on the device

Step 2: Clean and process the signal

Raw brain signals are noisy.

They must be filtered, organized, and translated into meaningful patterns. This is where machine learning and signal processing become essential.
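To make the cleaning step concrete, here is a minimal sketch using only the Python standard library. It is not how any particular device works. The recording is synthetic, and the sample rate, the 10 Hz rhythm, the noise level, and the helper names `remove_mean` and `power_at` are all assumptions chosen for illustration. The idea is simply to remove a constant sensor offset and then measure how much energy sits at one frequency.

```python
import math
import random


def remove_mean(samples):
    """Subtract the average so a constant sensor offset does not dominate."""
    mean = sum(samples) / len(samples)
    return [s - mean for s in samples]


def power_at(samples, freq_hz, sample_rate_hz):
    """Estimate signal power at one frequency with a single-bin DFT."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    return (re * re + im * im) / (n * n)


# Synthetic one-second recording (invented values): a 10 Hz rhythm buried
# in random noise, sitting on top of a constant offset of 5.0.
random.seed(0)
SAMPLE_RATE_HZ = 250
raw = [
    5.0 + math.sin(2 * math.pi * 10 * i / SAMPLE_RATE_HZ) + random.gauss(0, 0.5)
    for i in range(SAMPLE_RATE_HZ)
]

cleaned = remove_mean(raw)
power_10hz = power_at(cleaned, 10, SAMPLE_RATE_HZ)
power_40hz = power_at(cleaned, 40, SAMPLE_RATE_HZ)

# The rhythm stands out clearly against a frequency that holds only noise.
print(f"10 Hz power: {power_10hz:.4f}, 40 Hz power: {power_40hz:.4f}")
```

Real systems use far more sophisticated filtering and many channels at once, but the principle is the same: turn a messy stream of numbers into a few features that carry the meaningful pattern.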

Step 3: Interpret intent

The system tries to determine what the user intended.

For example:

- did the user mean to move left?

- select a word?

- trigger a device action?

- focus on a target?
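One simple way to picture intent decoding is a nearest-centroid classifier: compare the current signal features against averages recorded during calibration and pick the closest match. The sketch below assumes this approach purely for illustration. The intent names, the two-number feature vectors, and the `decode_intent` helper are hypothetical, and real decoders are typically much more complex.

```python
import math

# Hypothetical calibration data: the average feature vector recorded while a
# user repeatedly attempted each intent. The numbers are invented.
CENTROIDS = {
    "move_left": [0.9, 0.1],
    "move_right": [0.1, 0.9],
    "select": [0.8, 0.8],
    "rest": [0.1, 0.1],
}


def decode_intent(features, centroids=CENTROIDS):
    """Return (intent, confidence) for the closest calibration centroid."""
    distances = {
        name: math.dist(features, centre) for name, centre in centroids.items()
    }
    ranked = sorted(distances, key=distances.get)
    best, runner_up = ranked[0], ranked[1]
    # Confidence is the relative margin over the second-best guess (0 to 1).
    total = distances[best] + distances[runner_up]
    confidence = 1.0 if total == 0 else 1.0 - 2 * distances[best] / total
    return best, confidence


clear_intent, clear_conf = decode_intent([0.85, 0.2])
vague_intent, vague_conf = decode_intent([0.5, 0.5])
print(clear_intent, round(clear_conf, 2))
print(vague_intent, round(vague_conf, 2))
```

The second call shows why a confidence score is useful: a reading that sits between several intents still produces a "best" guess, but with a much smaller margin.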

Step 4: Execute output

Once the signal is interpreted, the system performs an action.

That might mean moving a pointer, typing text, or controlling assistive hardware.
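The output step can be sketched as a small dispatch table. The example below is an assumption-heavy illustration, not a real device API: the intent labels, the `ACTIONS` table, the `execute` helper, and the 0.6 confidence threshold are all hypothetical. It shows one plausible design choice, which is to do nothing when the decoder is unsure rather than risk the wrong action.

```python
def move_pointer(dx):
    """Stand-in for a real pointer update; returns a description instead."""
    return f"pointer moved {dx:+d}"


def type_text(text):
    """Stand-in for sending text to an on-screen keyboard."""
    return f"typed {text!r}"


# Hypothetical mapping from decoded intent labels to actions.
ACTIONS = {
    "move_left": lambda: move_pointer(-10),
    "move_right": lambda: move_pointer(10),
    "select": lambda: type_text("A"),
}


def execute(intent, confidence, threshold=0.6):
    """Run the mapped action only when the decoder is confident enough."""
    if confidence < threshold or intent not in ACTIONS:
        return "no action"
    return ACTIONS[intent]()


print(execute("move_left", 0.9))
print(execute("move_left", 0.3))
```

Where the threshold sits is a tradeoff: set it too high and the interface feels unresponsive, too low and it acts on noise.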

This is one reason AI matters so much in the future of BCIs. Better models can improve decoding quality, reduce error rates, and help systems adapt to individual users over time.
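As a rough illustration of what "adapting to individual users" can mean, the sketch below nudges a stored reference pattern toward each newly confirmed sample using an exponential moving average. This is an assumed, simplified technique rather than a description of any specific product, and the `adapt_centroid` helper, the learning rate, and the feature values are hypothetical.

```python
def adapt_centroid(centroid, new_sample, learning_rate=0.1):
    """Nudge a stored reference pattern toward a newly confirmed sample."""
    return [
        (1 - learning_rate) * c + learning_rate * x
        for c, x in zip(centroid, new_sample)
    ]


# Invented example: the user's "move left" signal has drifted from the
# pattern captured at calibration to a slightly different one.
calibrated = [0.9, 0.1]
drifted_signal = [0.7, 0.3]

centroid = calibrated
for _ in range(50):
    centroid = adapt_centroid(centroid, drifted_signal)

# After repeated confirmed samples, the stored pattern has followed the drift.
print([round(value, 2) for value in centroid])
```

A small learning rate makes the system slow to follow genuine change, while a large one makes it chase noise, so this value would need tuning per user and per device.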

Why BCIs could become much more important by 2030

The story is not that BCIs will suddenly become normal for everybody.

The story is that several barriers are being tackled at once:

- hardware miniaturization

- better AI signal decoding

- stronger clinical interest

- more public attention

- more investment

- more serious regulatory engagement

That combination matters.

Technologies usually become mainstream only after enough different layers start improving together.

BCIs are beginning to show that pattern.

Where BCIs may have the biggest real-world impact first

Healthcare and accessibility

This is still the clearest and strongest use case.

For people living with paralysis, ALS, spinal injuries, or severe motor loss, BCIs can open up new paths to:

- communication

- digital control

- independence

- environmental interaction

That alone is enough to make the field important.

Even if BCIs never become a mass consumer gadget, they could still change a great many lives by expanding what people with disabilities can do through direct neural interfaces.

Stroke recovery and rehabilitation

Rehabilitation is another major area to watch.

BCIs may help patients retrain movement pathways, interact with assistive systems, or support recovery workflows that become more personalized over time.

Assistive communication

One of the most moving BCI use cases is communication restoration.

If a person cannot speak or type easily, a reliable neural interface can create a new communication path. That is not just a tech upgrade. It is a human dignity upgrade.

Advanced computing environments

Beyond healthcare, BCIs may gradually enter specialized environments where hands-free control matters.

That could include:

- research labs

- advanced simulation systems

- accessibility-first workstations

- certain industrial or defense-adjacent settings

By 2030, the biggest impact may still be targeted and high-value rather than mass-market.

That is still a major shift.

Could BCIs change consumer tech too?

Eventually, yes.

But this is where people need patience.

The idea of sending messages, controlling interfaces, and interacting with augmented reality through thought is incredibly compelling. It also raises enormous challenges around:

- comfort

- accuracy

- safety

- battery life

- hardware design

- regulation

- trust

That means the near-term consumer version of BCI may arrive through smaller steps rather than one dramatic leap.

Those steps might include:

- better neurofeedback tools

- attention-aware wearables

- adaptive accessibility systems

- mixed-control interfaces that combine eye tracking, gesture, voice, and neural input

So yes, mind-controlled technology is becoming real, but it may arrive in layers.

What are the biggest benefits of BCIs?

1. Accessibility at a completely different level

This is the biggest one.

BCIs can give people new ways to interact with the world when ordinary physical input methods are unavailable or limited.

2. Faster human-computer interaction in certain contexts

Over time, BCIs could reduce the gap between intention and action.

That would matter most in settings where speed or accuracy is critical, or where physical limitations make conventional input difficult.

3. Deeper integration between digital systems and human capability

This is where the long-term future gets interesting.

BCIs are not just about medical restoration. They may eventually become part of how humans extend memory, perception, and digital control.

4. More personalized assistive technology

AI-driven BCIs may adapt to individuals more effectively over time, improving the quality of the interface instead of forcing every user into the same rigid design.

What are the biggest risks and concerns?

This field has enormous upside, but it also brings some of the heaviest ethical questions in modern technology.

Privacy

Brain data is not like browsing data.

It is far more intimate.

If BCIs scale, society will need much stronger rules about what neural data can be captured, stored, sold, or analyzed.

Security

Any system connected to software can be attacked.

When that system carries a person's communication or turns their intent into physical action, security stops being a feature issue and becomes a human safety issue.

Consent and autonomy

Who controls the interface?

Who decides how the data is used?

How much transparency does the user get?

Those questions must be answered before BCIs can grow safely.

Inequality

Advanced neural technology could widen access gaps if only wealthy institutions or wealthy users can benefit from the best systems.

Hype distortion

This is a quieter problem, but it matters.

When people oversell BCIs as instant telepathy or full mind reading, they confuse the public and create unrealistic expectations. That can damage trust in a field that already needs careful, patient progress.

What BCIs probably will not do by 2030

It is just as important to say what is unlikely.

By 2030, BCIs may become much more real and much more useful.

But they probably will not:

- become a universal, everyday consumer product

- read thoughts in a magical, unrestricted way

- erase the need for speech, touch, or traditional computing interfaces

- resolve every ethical problem around neural data

The actual near-term future is more practical and more meaningful:

BCIs will likely get better at restoring function, enabling communication, and expanding assistive computing.

That alone is revolutionary.

How BCIs connect to the wider future of AI

The future of BCIs is not just a neuroscience story. It is also an AI story.

Why?

Because brain signals are difficult to decode.

AI helps systems interpret noisy data, personalize response models, and improve over time. In other words, AI is not replacing the brain in this equation. It is helping translate brain activity into commands that machines can act on.

That is why BCIs and AI are likely to keep developing together.

If you are tracking future tech seriously, you should not think about BCIs in isolation. You should think about them alongside:

- AI assistants

- augmented reality

- smart glasses

- accessibility systems

- wearable computing

That broader convergence is where the real long-term shift may happen.

Final takeaway

Brain-computer interfaces are no longer science fiction, but they are also not a finished consumer revolution.

They are something more interesting than either extreme.

They are an emerging human-interface layer with clear medical value today, strong assistive potential tomorrow, and a realistic chance to reshape how some people interact with technology by 2030.

The most important thing to understand is this:

BCIs do not need to become normal for everyone to become historically important.

If they help people communicate again, control devices again, or regain forms of independence that were previously lost, that is already enough to change the future.

And if the technology keeps improving, the bigger question will not be whether humans can control technology with their minds.

It will be how much of that capability society is ready to trust, regulate, and integrate into everyday life.


FAQ

Frequently asked questions

What is a brain-computer interface?

A brain-computer interface is a system that captures brain signals and translates them into commands a machine can understand.

Are BCIs already real?

Yes. BCIs are already being tested in medical and assistive contexts, especially for communication and device control.

Will BCIs be common by 2030?

They may become much more visible by 2030, especially in healthcare and accessibility, but mainstream consumer use is still less certain.

What is the biggest benefit of BCIs?

The biggest benefit is giving people new ways to communicate, control devices, or regain function when physical movement is limited.

What is the biggest risk of BCIs?

Privacy and security are major risks because brain data is extremely sensitive and could become one of the most personal categories of data ever captured.